Received 23 May 2016; accepted 12 July 2016; published 15 July 2016

1. Introduction

Bin picking by a robot is one of the most typical tasks, both on industrial lines and in the home. Industrial lines have equipment that arranges objects in rows, but homes do not, and objects there are often placed randomly. The robot therefore has to recognize the location of the target object and grasp it selectively while taking collisions with other objects into account. Recognizing the 3-D environment is necessary to identify the target among randomly placed objects.

A laser range finder (LRF) [1] is used as the sensor to measure the 3-D environment, and objects are recognized with a model-based approach [2] - [4]. The LRF captures a point cloud, and many studies recognize objects from point clouds, such as [5] - [7]. Among methods that add information to each point, Johnson and Kang included extra information such as color in the point cloud [8], and Akca included intensity values [9]. Among methods that add information to key points of a point cloud, Tombari et al. proposed the signature of histograms of orientations [10] [11], Rusu et al. proposed point feature histograms [12], and Sun et al. proposed the point fingerprint, which uses geodesic circles around the reference point as the descriptor [13]. As a method that describes relations among several feature points, Drost et al. made recognition efficient by using a hash table [14].

In bin-picking research, [15] and [16] assumed shape primitives for unknown objects. Morales et al. proposed a 2-D segmentation method to identify objects [17]. Many studies address the grasp planning problem, such as [18] - [22]. Sanz et al. assumed a 2-D model and planned the grasping point on the object using an ellipsoid [23]. Fuchs et al. proposed a bin-picking method for cylindrical objects [24].

In this paper, we recognize the position and posture of the object using the ICP algorithm [24] - [27]. A grasping point on the recognized object is searched within the operating range of the robotic manipulator with a two-fingered hand, and an approach path to the grasping point is then searched. Sub-targets are used to avoid collisions along the way: several sub-targets are defined, and routing the path through them makes collision avoidance efficient. We expect this system to make pick-and-place motion safer and more reliable.

2. Outline of the System

2.1. System Overview

In this paper, we assume bin picking in the home with a two-fingered robotic hand, with the target objects placed on a flat surface. 3-D information on the objects is acquired with the LRF sensor, which is mounted on the robotic manipulator as shown in Figure 1. ΣA is the coordinate frame of the robotic manipulator, and ΣB is the coordinate frame of the LRF sensor. The position and posture of each object are recognized from this 3-D information using a known model, and an approach path to the recognized object is then searched.
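To make the roles of ΣA and ΣB concrete, the sketch below maps LRF points from the sensor frame ΣB into the manipulator frame ΣA with a 4×4 homogeneous transform. It assumes the pose of ΣB in ΣA (called `T_AB` here, a hypothetical name) is already known, for example from forward kinematics plus a hand-eye calibration; the paper does not state how this pose is obtained. The helper names are illustrative, and the sketch uses NumPy.

```python
import numpy as np


def make_transform(rotation, translation):
    """Build a 4x4 homogeneous transform from a 3x3 rotation and a translation."""
    T = np.eye(4)
    T[:3, :3] = rotation
    T[:3, 3] = translation
    return T


def sensor_to_robot(points_B, T_AB):
    """Map an (N, 3) array of points from the LRF frame ΣB into the robot frame ΣA.

    T_AB is the (assumed known) pose of ΣB expressed in ΣA.
    """
    pts = np.asarray(points_B, dtype=float)
    homogeneous = np.hstack([pts, np.ones((len(pts), 1))])  # (N, 4)
    return (T_AB @ homogeneous.T).T[:, :3]


# Example: sensor rotated 90 degrees about Z and mounted 500 mm above the base.
Rz = np.array([[0.0, -1.0, 0.0],
               [1.0,  0.0, 0.0],
               [0.0,  0.0, 1.0]])
T_AB = make_transform(Rz, [0.0, 0.0, 500.0])
p_A = sensor_to_robot([[100.0, 0.0, 0.0]], T_AB)  # (100, 0, 0) in ΣB -> (0, 100, 500) in ΣA
```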

The system consists of the following four phases, which are repeated each time an object is picked. A flow diagram of the system is shown in Figure 2.

PHASE 1: 3-D measurement of the objects.

PHASE 2: Recognition of the position and posture of the object.

PHASE 3: Search for the grasping point on the recognized object.

PHASE 4: Search for the approach path to the grasping point on the recognized object.
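The four phases can be sketched as a loop that runs once per picked object. Each phase is passed in as a hypothetical callable (`measure`, `recognize`, `find_grasp`, `find_path`, `execute`), and the policy of stopping when no grasp or path is found is an assumption made for illustration, not something the paper specifies.

```python
from typing import Any, Callable, List, Optional


def bin_picking_loop(
    measure: Callable[[], Any],
    recognize: Callable[[Any], Optional[Any]],
    find_grasp: Callable[[Any, Any], Optional[Any]],
    find_path: Callable[[Any, Any], Optional[Any]],
    execute: Callable[[Any, Any], None],
    max_picks: int = 100,
) -> List[Any]:
    """Repeat PHASE 1-4 once per picked object until nothing is recognized."""
    picked: List[Any] = []
    while len(picked) < max_picks:
        cloud = measure()                        # PHASE 1: 3-D measurement
        target = recognize(cloud)                # PHASE 2: position and posture
        if target is None:
            break                                # nothing left to pick
        grasp = find_grasp(target, cloud)        # PHASE 3: grasping point
        path = find_path(grasp, cloud) if grasp is not None else None  # PHASE 4
        if path is None:
            break                                # hypothetical policy: stop if unreachable
        execute(target, path)
        picked.append(target)
    return picked


# Toy usage with stub phases: three stacked objects, the top one is picked first.
remaining = ["upper", "lower-1", "lower-2"]
picked = bin_picking_loop(
    measure=lambda: list(remaining),
    recognize=lambda cloud: cloud[0] if cloud else None,
    find_grasp=lambda target, cloud: ("grasp", target),
    find_path=lambda grasp, cloud: ["waiting", grasp],
    execute=lambda target, path: remaining.remove(target),
)
print(picked)  # ['upper', 'lower-1', 'lower-2']
```

Re-measuring at the start of every iteration mirrors the system's behavior of repeating all phases after each pick.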

2.2. 3-D Measurement of the Objects (PHASE 1)

The LRF is mounted on the wrist of the 6-DOF robotic manipulator, as shown in Figure 1. The target objects are measured while the manipulator freely changes the viewpoint, and measuring from various directions reduces occlusion [28]. This scanning operation generates a 3-D point cloud of the environment, as shown in Figure 3.
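A minimal sketch of integrating scans from several viewpoints, assuming each scan comes with the pose of ΣB in ΣA at the time it was taken. The floor removal (compare Figure 3(c)) is done here with a simple height threshold in ΣA, which assumes a horizontal floor at a known height; the paper does not describe how the floor was removed. The function name and the 5 mm margin are hypothetical, and units are millimeters.

```python
import numpy as np


def merge_scans(scans, poses, floor_z=0.0, floor_margin=5.0):
    """Integrate LRF scans from several viewpoints into one cloud in ΣA.

    scans: list of (N_k, 3) arrays, each in the sensor frame ΣB at its viewpoint.
    poses: list of 4x4 transforms, the pose of ΣB in ΣA for each scan.
    Points no higher than floor_z + floor_margin (mm) are treated as floor and
    dropped; this assumes a horizontal floor at height floor_z in ΣA.
    """
    merged = []
    for points_B, T_AB in zip(scans, poses):
        pts = np.asarray(points_B, dtype=float)
        merged.append(pts @ T_AB[:3, :3].T + T_AB[:3, 3])  # R p + t for each row
    cloud = np.vstack(merged)
    return cloud[cloud[:, 2] > floor_z + floor_margin]


# Two toy scans: the first with ΣB = ΣA, the second with ΣB shifted 50 mm up.
T_up = np.eye(4)
T_up[2, 3] = 50.0
scan_front = [[0.0, 0.0, 0.0], [0.0, 0.0, 30.0]]  # floor point + object point
scan_top = [[10.0, 0.0, 0.0]]                      # becomes z = 50 in ΣA
cloud = merge_scans([scan_front, scan_top], [np.eye(4), T_up])
```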

2.3. Recognition of the Position and Posture of the Object (PHASE 2)

In this phase, the posture and the position of the object is presumed using the 3-D point clouds measured in PHASE 1. This system is premising that the size of the object and the shape are known such as Figure 4. A near point is searched based on ICP algorithm between the model point group and the measurement point group of

![]()

Figure 1. A scanning process using the LRF sensor.

![]()

Figure 2. The flow diagram of the system.

![]()

![]() (a) (b) (c)

(a) (b) (c)

Figure 3. An example of 3-D point cloud. (a) An environment; (b) point clouds; (c) without floor.

![]()

![]() (a) (b)

(a) (b)

Figure 4. An example of the object model. (a) A square pillar; (b) a model of square pillar.

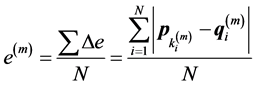

the object prepared beforehand. Equation (1) is the evaluation function.

(1)


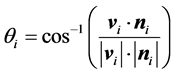

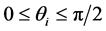

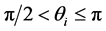

Here, p is the position vector of a point in the point cloud measured by the LRF, q is the position vector of a point in the model point cloud, N is the number of model points used, Δe is the distance between corresponding points, m is the iteration count, and k_i is the index of the measured point corresponding to model point i. To handle occlusion, the set of model points used, and hence N, is updated every time the posture of the model changes, as shown in Figure 5, because the number of points visible from the LRF changes with the position and posture of the model. The ICP algorithm has difficulty handling data with little overlap [29]. Whether a model point is used is judged by the following Equation (2).

$$
\begin{cases}
\mathbf{v} \cdot \mathbf{n} \ge 0 & \text{: point is unused} \\
\mathbf{v} \cdot \mathbf{n} < 0 & \text{: point is used}
\end{cases}
\tag{2}
$$

Here, v is the vector from the viewpoint to the model point, and n is the face normal vector of the model point.
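The following sketch combines the visibility test of Equation (2) with a basic point-to-point ICP loop. Several choices here are assumptions rather than the paper's stated implementation: a model point is kept when v · n < 0 (with outward normals), the evaluation value e is taken as the mean nearest-neighbor distance over the visible model points, correspondences are found by brute force, and each rigid update uses the standard SVD-based (Kabsch) solution. The function names are hypothetical.

```python
import numpy as np


def visible_mask(points, normals, viewpoint):
    """Keep points with v . n < 0, where v = point - viewpoint (outward normals assumed)."""
    v = points - viewpoint
    return np.einsum("ij,ij->i", v, normals) < 0.0


def best_rigid_transform(src, dst):
    """SVD (Kabsch) solution for R, t minimizing sum |R src_i + t - dst_i|^2."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)
    U, _, Vt = np.linalg.svd(H)
    D = np.diag([1.0, 1.0, np.sign(np.linalg.det(Vt.T @ U.T))])  # avoid reflections
    R = Vt.T @ D @ U.T
    return R, dst_c - R @ src_c


def correspond(model, normals, measured, viewpoint, R, t):
    """Pose the model, keep its visible points, and pair each with its nearest measured point."""
    q = model @ R.T + t
    n = normals @ R.T
    q = q[visible_mask(q, n, viewpoint)]               # N changes with the pose
    d = np.linalg.norm(q[:, None, :] - measured[None, :, :], axis=2)
    k = d.argmin(axis=1)                               # k_i for each model point q_i
    e = d[np.arange(len(q)), k].mean() if len(q) else np.inf
    return q, measured[k], e


def icp(model, normals, measured, viewpoint, iterations=50, tol=1e-9):
    """Return (R, t, e): the estimated model pose and the final mean distance e."""
    model, normals, measured = (np.asarray(a, dtype=float) for a in (model, normals, measured))
    R, t, prev_e = np.eye(3), np.zeros(3), np.inf
    for _ in range(iterations):
        q, p, e = correspond(model, normals, measured, viewpoint, R, t)
        if len(q) < 3 or prev_e - e < tol:   # too few points, or no further improvement
            break
        prev_e = e
        dR, dt = best_rigid_transform(q, p)
        R, t = dR @ R, dR @ t + dt            # compose the increment with the current pose
    else:
        _, _, e = correspond(model, normals, measured, viewpoint, R, t)  # e for the final pose
    return R, t, e
```

Because the visible set is recomputed every iteration, N varies with the pose as described above; a real system would also need a good initial pose and a faster nearest-neighbor search (for example a k-d tree).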

2.4. Search for the Grasping Point on the Recognized Object (PHASE 3)

In this phase, a grasping point for the two-fingered robotic hand is searched on the recognized object. The model used for recognition carries an axis for grasping, as shown in Figure 6, and the starting position of the robotic hand for the search is determined from this axis, as shown in Figure 7.

Figure 5. The principle of occlusion estimation.

Figure 6. The axis for grasping (cylinder model).

Using this axis simplifies the search for the grasping point. X, Y, and Z are the direction vectors of the coordinate axes of the axis for grasping, and XE, YE, and ZE are the direction vectors of the coordinate axes of the robotic hand. The posture of the hand is determined in three steps. First step: XE is made parallel to Z. Second step: the closest point on the circumference is searched. Third step: ZE is made identical to C, the direction vector from the closest point to the axis for grasping. The grasping point is then decided using the five search-direction patterns shown in Figure 8: (a) the horizontal direction relative to the axis for grasping; (b) the pitch direction relative to the axis; (c) the roll direction relative to the axis; (d) the yaw direction at the end of the axis; and (e) the pitch direction at the end of the axis. Finally, the point closest to the robot within its operating range is chosen as the grasping point.
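The three-step posture construction can be sketched for a cylindrical model as follows. The inputs (a point on the grasping axis, its direction Z, the cylinder radius, and the hand's start position) and the name `hand_posture` are hypothetical, and taking the closest point at the hand's own position along the axis is an illustrative assumption. The returned rotation has XE, YE, and ZE as its columns, with YE = ZE × XE completing a right-handed frame.

```python
import numpy as np


def hand_posture(axis_origin, axis_dir, radius, hand_pos):
    """Return (closest_point, R) with columns XE, YE, ZE of the hand expressed in ΣA."""
    Z = np.array(axis_dir, dtype=float)
    Z /= np.linalg.norm(Z)                      # unit direction of the grasping axis
    a = np.array(axis_origin, dtype=float)
    rel = np.array(hand_pos, dtype=float) - a
    along = rel @ Z                             # hand position measured along the axis
    radial = rel - along * Z                    # component perpendicular to the axis
    r_norm = np.linalg.norm(radial)
    if r_norm < 1e-9:
        raise ValueError("hand lies on the grasping axis; radial direction undefined")
    d = radial / r_norm
    closest = a + along * Z + radius * d        # step 2: closest point on the circumference
    XE = Z                                      # step 1: XE parallel to Z
    ZE = -d                                     # step 3: ZE along C (closest point -> axis)
    YE = np.cross(ZE, XE)                       # completes a right-handed frame
    return closest, np.column_stack([XE, YE, ZE])


# Cylinder of radius 20 mm lying along X; hand starts above it.
closest, R = hand_posture([0, 0, 0], [1, 0, 0], 20.0, [50, 0, 100])
```

With the hand above a cylinder lying along X, the closest circumference point is directly below the hand and ZE points straight down toward the axis.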

Collisions with objects other than the target must also be considered, because the collision problem is unavoidable when many objects are placed randomly. An interference model of the robotic manipulator is used to handle collisions, as shown in Figure 9. Figure 10 shows an example of the interference judgment: in Figure 10(a), a finger of the robotic hand interferes with a lower object, and Figure 10(b) shows the result after the interference has been avoided.
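One simple way to realize an interference check against the point cloud (compare Figure 9(b)) is to model each finger as an axis-aligned box in the hand frame and count measured points that fall inside. The box model, the `min_points` tolerance, and the helper names are hypothetical simplifications. The sketch also assumes that the target's own points lie between the fingers rather than inside the finger boxes, and that all candidates are already within the operating range. Among the collision-free candidates, the one closest to the robot is returned, mirroring the selection rule for the grasping point.

```python
import numpy as np


def interferes(cloud, hand_origin, hand_R, finger_boxes, min_points=1):
    """True if at least min_points cloud points lie inside any finger box.

    finger_boxes: list of (lo, hi) corner pairs of axis-aligned boxes expressed
    in the hand frame (hypothetical simplified interference model).
    """
    local = (np.asarray(cloud, dtype=float) - hand_origin) @ hand_R  # rows = R^T (p - o)
    for lo, hi in finger_boxes:
        inside = np.all((local >= lo) & (local <= hi), axis=1)
        if np.count_nonzero(inside) >= min_points:
            return True
    return False


def select_grasp(candidates, cloud, finger_boxes, robot_base):
    """Among collision-free (origin, R) candidates, return the one closest to the robot."""
    free = [c for c in candidates if not interferes(cloud, c[0], c[1], finger_boxes)]
    if not free:
        return None
    return min(free, key=lambda c: np.linalg.norm(np.asarray(c[0], dtype=float) - robot_base))


# Toy check: one finger box just below the hand origin, one lower-object point.
finger = [((-5.0, -5.0, -15.0), (5.0, 5.0, 0.0))]
cloud = [[0.0, 0.0, -10.0]]
candidates = [
    (np.array([0.0, 0.0, 0.0]), np.eye(3)),    # finger hits the point
    (np.array([200.0, 0.0, 0.0]), np.eye(3)),  # free, farther away
    (np.array([100.0, 0.0, 0.0]), np.eye(3)),  # free, closest free candidate
]
chosen = select_grasp(candidates, cloud, finger, robot_base=np.zeros(3))
```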

Figure 7. Decision procedure of the posture of the robotic hand. (a) XE is made parallel to Z; (b) searching a point on the closest circumference; (c) ZE and C are made identical.

Figure 9. The standard search pattern of the gripping point. (a) Interference model; (b) interference with the point cloud.

Figure 10. An example of judgment of interference. (a) With interference; (b) without interference.

2.5. Search for the Approach Path to the Grasping Point on the Recognized Object (PHASE 4)

In this phase, an approach path from the waiting position of the robotic manipulator to the grasping point is searched, basically by inverse kinematics. When several approach paths are possible, the movement cost of each from the waiting posture is estimated using Equation (3).

$$
S = \sum_{i} \left| \Delta\theta_i \right|
\tag{3}
$$

Here, S is the total change of the joints from the waiting position, which is used as the cost of each approach path, and Δθ_i is the change in the angle of joint i from the waiting position. The approach path with the minimum S within the operating range is selected.
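Assuming Equation (3) sums the absolute joint changes, selecting the approach path reduces to the few lines below. The list of inverse-kinematics solutions and the `in_range` predicate for the operating range are hypothetical inputs, and joint angles are compared without wrap-around.

```python
def joint_change_cost(waiting, candidate):
    """S = sum_i |Δθ_i|: total joint change (rad) from the waiting posture."""
    if len(waiting) != len(candidate):
        raise ValueError("joint vectors must have the same length")
    return sum(abs(c - w) for w, c in zip(waiting, candidate))


def select_min_cost(waiting, ik_solutions, in_range=lambda q: True):
    """Return the IK solution with minimum S among those inside the operating range."""
    feasible = [q for q in ik_solutions if in_range(q)]
    if not feasible:
        return None
    return min(feasible, key=lambda q: joint_change_cost(waiting, q))


waiting = [0.0] * 6
solutions = [
    [0.1, 0.2, 0.0, 0.0, 0.0, 0.0],   # S = 0.3
    [-0.5, 0.0, 0.0, 0.0, 0.0, 0.1],  # S = 0.6
]
best = select_min_cost(waiting, solutions)                            # first solution
limited = select_min_cost(waiting, solutions, lambda q: q[1] < 0.15)  # second solution
```

Restricting the candidates with `in_range` before taking the minimum corresponds to selecting the minimum S within the operating range.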

Like the search for the grasping point, the search for the approach path must consider collisions. In this paper, sub-targets are used to avoid them: several sub-targets are prepared beforehand by the user, and collisions are avoided by searching for an approach path via a sub-target, as shown in Figure 11.
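A minimal sketch of routing via user-defined sub-targets, under simplifying assumptions that are not from the paper: paths are straight Cartesian segments, obstacles are points from the other objects, a segment counts as collision-free when every sample along it keeps a fixed clearance, and sub-targets are tried in order of total path length. The direct path is used when it is already free. Function names and parameters are hypothetical.

```python
import math


def segment_free(a, b, obstacles, clearance, step=5.0):
    """Sample segment a->b about every `step` mm; free if all samples keep clearance."""
    n = max(1, math.ceil(math.dist(a, b) / step))
    for s in range(n + 1):
        u = s / n
        p = [ai + u * (bi - ai) for ai, bi in zip(a, b)]
        if any(math.dist(p, o) < clearance for o in obstacles):
            return False
    return True


def plan_via_subtargets(start, goal, subtargets, obstacles, clearance):
    """Direct path if free, else start -> sub-target -> goal with the shortest free detour."""
    if segment_free(start, goal, obstacles, clearance):
        return [start, goal]
    by_length = sorted(subtargets, key=lambda s: math.dist(start, s) + math.dist(s, goal))
    for sub in by_length:
        if segment_free(start, sub, obstacles, clearance) and segment_free(sub, goal, obstacles, clearance):
            return [start, sub, goal]
    return None  # no user-defined sub-target gives a collision-free path


start, goal = (0.0, 0.0, 100.0), (100.0, 0.0, 0.0)
obstacle = [(50.0, 0.0, 50.0)]                   # point of another object on the direct line
subs = [(50.0, 0.0, 80.0), (100.0, 0.0, 100.0)]  # first is blocked, second is free
path = plan_via_subtargets(start, goal, subs, obstacle, clearance=20.0)
```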

3. Bin-Picking Experiment with Stacked Objects

To verify the proposed method, the planned motion was tested in a simple experimental environment. Figure 12 shows a bin-picking experiment with three objects, which are stacked as shown in Figure 12(a). When objects are in contact with each other, segmenting them becomes difficult. The target objects are cylinders, and the axis for grasping is set at the center of the cylinder, as shown in Figure 6. Therefore, all five basic search patterns (Figure 8) are used in the search for the grasping point. Figure 12(c) shows the result of integrating measurements taken from four directions (front, top, left, and right); the integrated point cloud contains about 9000 points.

3.1. The Recognition Result of the Objects

A model of the cylinder used for recognition is shown in Figure 13. The threshold of e(m) is set to 10 mm, equal to the specified precision of the LRF. Of the 36 converged results obtained, 11 fell below the threshold. Six of these 11 results converged on the upper object of the stack, as shown in Figure 14(a), because more data points represent the upper object and its shape can be measured more accurately. The remaining 5 converged on the lower objects of the stack, as shown in Figure 14(b). All other results were false detections, as shown in Figure 14(c); these correspond to local minima. Table 1 shows the recognition result of Figure 14(a). The upper object is chosen as the first target because we want to pick the objects one by one from the top of the stack by pick-and-place motion.

Figure 11. An example of a path via a sub-target. (a) Without sub-target; (b) with sub-target.

Figure 12. The experimental environment and measurement result. (a) The experimental environment; (b) target objects; (c) measurement result.

3.2. The Motion Planning of the Robot and the Execution Result

Motion planning was then performed for the first target. Figure 15(b) shows the search result for the grasping point, Figure 15(c) the search result for the approach path, and Figure 15(d) the execution result on the actual robotic manipulator. The upper object was successfully picked up from the stack without colliding with the lower objects.

After the first target was picked up, motion planning was performed for the remaining objects. Re-measurement is necessary to acquire the parts that had been hidden by the first target; this partial information is integrated, and the objects are re-recognized. The object nearest the robot among the remaining objects is chosen as the next target. Figures 16 and 17 show the experimental results for the second and third targets, respectively, and Table 2 shows the re-recognition results for these targets. Each object was successfully picked from the stack in turn. From this result, we consider the method applicable to environments containing many objects and this motion planning method effective for pick-and-place motion.

Figure 14. An example of the recognition result. (a) Matched (e = 7.2 mm); (b) matched (e = 9.6 mm); (c) unmatched (e = 12.3 mm).

Table 1. The recognition result of the position and the posture [(a) in Figure 13].

Table 2. The recognition result of the position and the posture [Figure 15 and Figure 16].

4. Conclusion

This paper proposed a motion planning method for the pick-and-place motion of a robotic manipulator in an environment where many objects are placed randomly. The 3-D information measured by the LRF is used for motion planning as a 3-D point cloud, and the objects are recognized using the ICP algorithm. An axis attached to the model is used in the search for the grasping point, which simplifies that search. The collision problem is unavoidable when many objects are placed randomly, but it was handled by using the interference model and the sub-targets. Using these methods, we achieved pick-and-place motion with the actual robotic manipulator in an environment where several objects were stacked. Future work includes distinguishing objects of various shapes, improving recognition efficiency, and improving the precision of the system.