1. Introduction

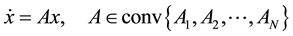

Let linear uncertain system

(1)

(1)

be given where ,

,

are

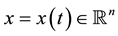

are  real matrices. Consider the following matrix inequalities

real matrices. Consider the following matrix inequalities

(2)

(2)

where  and the symbol “

and the symbol “ ” stands for positive definiteness. The matrix P is called a common solution to (2).

” stands for positive definiteness. The matrix P is called a common solution to (2).

If the system ( 2) has a common  solution, then this system is uniformly asymptotically stable [1] .

solution, then this system is uniformly asymptotically stable [1] .

The problem of existence of common positive definite solution P of (2) has been studied in a lot of works (see [1] - [7] and references therein). Numerical solution for common P via nondifferentiable convex optimization has been discussed in [8] .

In the first part of the paper we treat the problem (2) as a nonconvex optimization problem (minimization of a convex function under nonconvex constraints) and apply a modified gradient method. The comparison with [8] shows that our approach gives better result in some cases.

In the second part we consider the stabilization problem, i.e. the following question: for the affine family

where  is a box, is there a stable member? We consider a sufficient condition which follows from the Bendixson theorem [9] .

is a box, is there a stable member? We consider a sufficient condition which follows from the Bendixson theorem [9] .

2. Gradient Method

According to [2] , let  be the set (subspace) of

be the set (subspace) of  dimensional symmetric block-diagonal matrices of the form

dimensional symmetric block-diagonal matrices of the form  where

where  is symmetric.

is symmetric.

Let  be a basis of

be a basis of![]() ,

, ![]() ,

,

![]()

![]() (3)

(3)

Then ![]() has CQLF

has CQLF ![]() there exists

there exists ![]() such that

such that![]() . In this case the matrix

. In this case the matrix ![]() is a common solution to (2) where

is a common solution to (2) where

![]()

The function ![]() is positive homogenous

is positive homogenous![]() . Therefore the vector

. Therefore the vector ![]() can be restricted to the condition

can be restricted to the condition![]() . The advantage of the restriction

. The advantage of the restriction ![]() shows the following proposition.

shows the following proposition.

Proposition 1. Let ![]() be the unit sphere, let the function

be the unit sphere, let the function ![]() be positive homo-

be positive homo-

geneous ![]() and be differentiable at

and be differentiable at![]() . Assume that

. Assume that![]() . Then

. Then ![]()

where![]() ,

, ![]() denotes the gradient and

denotes the gradient and ![]() denotes the scalar product.

denotes the scalar product.

Proof: Since ![]() is positive homogeneous, it increases in the direction of the vector a: for

is positive homogeneous, it increases in the direction of the vector a: for![]() ,

,

![]() .

.

Therefore the directional derivative of f at a in the direction of a is positive ![]()

On the other hand

![]()

and

![]() □

□

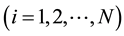

Proposition 1 shows that under its assumption the minus gradient vector at the point a is directed into the unit ball (Figure 1).

Consider the following optimization problem

![]()

![]()

Figure 1. The direction ![]() of the minus gradient.

of the minus gradient.

Since the matrix ![]() is symmetric, the function

is symmetric, the function ![]() (3) can be written as

(3) can be written as

![]()

The gradient vector of ![]() at a point a is:

at a point a is:

![]() (4)

(4)

where ![]() is the unit eigenvector of

is the unit eigenvector of ![]() corresponding to the simple maximum eigenvalue [2] .

corresponding to the simple maximum eigenvalue [2] .

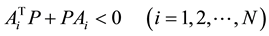

Well-known gradient algorithm in combination with Proposition 1 gives the following.

Algorithm 1.

Step 1. Take an initial point![]() . Compute

. Compute![]() . If

. If![]() , find t such that the line

, find t such that the line

![]()

intersects the unit sphere ![]() (Figure 2).

(Figure 2).

Step 2. Take ![]() where

where ![]() satisfies the condition

satisfies the condition![]() . If

. If![]() ,

, ![]() is re-

is re-

quired point. Otherwise find t such that the line ![]() intersects the unit sphere and repeat the procedure.

intersects the unit sphere and repeat the procedure.

Example 1. Consider the switched system

![]()

where

![]()

are Hurwitz stable matrices. Let

![]()

![]()

For ![]()

![]()

Take the initial point![]() , then

, then

![]()

is positive definite. Eigenvalues of the matrix

![]()

are ![]()

Maximum eigenvalue 4.015 is simple and the corresponding unit eigenvector is

![]()

Gradient of the function ![]() at

at ![]() is

is

![]()

The vector ![]() should be on the six dimensional unit sphere. Therefore

should be on the six dimensional unit sphere. Therefore ![]() and

and

![]()

After 9 steps, we get ![]() where

where

![]()

![]()

![]() is a common positive definite solution for

is a common positive definite solution for ![]() and

and![]() .

.

The same problem solved by the algorithm from [8] gives answer only after 70 steps. We have solved a number of examples using the above gradient algorithm and by the algorithm from [8] . These examples show that this algorithm is faster than the algorithm from [8] in some cases.

As the comparison with the algorithm from [8] is concerned, the algorithm from [8] at each step uses the gradient only one maximum eigenvalue function, i.e. at 1 step it uses the gradient of![]() , at 2 step the gradient of

, at 2 step the gradient of ![]() and so on. This procedure delays the convergence. In our algorithm we use the function

and so on. This procedure delays the convergence. In our algorithm we use the function ![]() and the corresponding gradient direction decreases the greates maximum eigenvalue.

and the corresponding gradient direction decreases the greates maximum eigenvalue.

On the other hand an obviously advantage of the method from [8] is the choose of the step size, which is given by an exact formula, whereas our step size is determined by the intersection of the corresponding rays with the unit sphere.

3. Sufficient Condition for a Stable Member

In this section we consider a sufficient condition for a stable member which is obtained by using Bendixson’s theorem.

If a matrix is symmetric then it is stable if and only if it is negative definite. Therefore if a family consists of symmetric matrices then searching for stable element is equivalent to the searching for negative definite one.

On the other hand every real ![]() matrix A can be decomposed

matrix A can be decomposed

![]()

where B is symmetric and C is skew-symmetric. Bendixson’s theorem gives important inequalities for the eigenvalues of A, B and C.

Theorem 1. ([9] , p. 40) If A is an ![]() matrix,

matrix, ![]() and

and ![]()

![]() ,

,

![]() are the eigenvalues of A, B then

are the eigenvalues of A, B then

![]()

Bendixson’s theorem leads to the following.

Proposition 2. Let the family ![]() be given and

be given and ![]() is the symmetric part of

is the symmetric part of![]() . Then

. Then

1) If there exists ![]() such that

such that ![]() is Hurwitz stable then

is Hurwitz stable then ![]() is also Hurwitz stable,

is also Hurwitz stable,

2) If there exists ![]() such that

such that ![]() is positive stable (all eigenvalues lie in the open right half plane) then

is positive stable (all eigenvalues lie in the open right half plane) then ![]() is also positive stable.

is also positive stable.

Proposition 2 gives a sufficient condition for the existence of a stable element.

In the case of affine family

![]()

where![]() ,

, ![]() is a box or

is a box or![]() , the searching procedure for stable element in

, the searching procedure for stable element in ![]() can be effectively solved by powerful tools of Linear Matrix Inequalities (Matlab’s LMI Toolbox).

can be effectively solved by powerful tools of Linear Matrix Inequalities (Matlab’s LMI Toolbox).

In the non-affine case of the family ![]() the gradient algorithm for a stable element in

the gradient algorithm for a stable element in ![]() is applicable.

is applicable.

Example 2. Consider affine family

![]()

![]() . Then

. Then

![]()

LMI method applied to the matrix inequality problem ![]() gives the value within a few seconds

gives the value within a few seconds

![]()

and![]() , and consequently

, and consequently ![]() is stable.

is stable.

LMI method applied to the inequality ![]() gives also

gives also

![]()

so the family ![]() contains positive stable matrix

contains positive stable matrix![]() .

.

We have investigated Example 2 by the algorithm from [10] and positive answer is obtained after about 100 seconds.

Example 3. Consider non-affine family

![]()

![]() . Here

. Here

![]()

Consider the function

![]()

We are looking for ![]() satisfying

satisfying![]() . If for some

. If for some ![]() the maximal eigenvalue

the maximal eigenvalue ![]() is simple then

is simple then ![]() is differentiable at

is differentiable at ![]() and its gradient can be easily calculated (by the analogy with (4)).

and its gradient can be easily calculated (by the analogy with (4)).

For this example, gradient method gives solution after 7 steps:

![]()

(see Table 1). The step size t is chosen from the decreasing condition of the function![]() : t must be chosen such that

: t must be chosen such that

![]()

This example has been solved by the algorithm from [10] as well. Positive answer has been obtained only after

![]()

Table 1. Gradient algorithm for example 3.

55 steps. We start with ![]() and the algorithm from [10] gives another stabilizing point

and the algorithm from [10] gives another stabilizing point

![]() .

.

The eigenvalues of ![]() are

are![]() ,

,![]() .

.

4. Conclusion

In the first part of the paper, we consider the stability problem of a matrix polytope through common quadratic Lyapunov functions. We suggest a modified gradient algorithm. In the second part by using Bendixson’s theorem a sufficient condition for a stable member is given.

NOTES

*Corresponding author.